嵌入向量

嵌入在LlamaIndex中用于通过复杂的数值表示来呈现您的文档。嵌入模型接收文本作为输入,并返回一长串数字,这些数字用于捕捉文本的语义。这些嵌入模型经过训练以这种方式表示文本,并有助于实现许多应用,包括搜索!

从高层次来看,如果用户询问关于狗的问题,那么该问题的嵌入向量将与讨论狗的内容高度相似。

在计算嵌入向量之间的相似度时,有多种方法可供使用(点积、余弦相似度等)。默认情况下,LlamaIndex在比较嵌入向量时使用余弦相似度。

有许多嵌入模型可供选择。默认情况下,LlamaIndex使用来自OpenAI的text-embedding-ada-002。我们还支持Langchain此处提供的任何嵌入模型,同时提供了一个易于扩展的基类用于实现您自己的嵌入。

在LlamaIndex中,嵌入模型通常会在Settings对象中指定,然后在向量索引中使用。该嵌入模型将用于嵌入索引构建期间使用的文档,以及嵌入您稍后使用查询引擎进行的任何查询。您还可以为每个索引单独指定嵌入模型。

如果您尚未安装嵌入向量:

pip install llama-index-embeddings-openai然后:

from llama_index.embeddings.openai import OpenAIEmbeddingfrom llama_index.core import VectorStoreIndexfrom llama_index.core import Settings

# changing the global defaultSettings.embed_model = OpenAIEmbedding()

# local usageembedding = OpenAIEmbedding().get_text_embedding("hello world")embeddings = OpenAIEmbedding().get_text_embeddings( ["hello world", "hello world"])

# per-indexindex = VectorStoreIndex.from_documents(documents, embed_model=embed_model)为了节省成本,您可能希望使用本地模型。

pip install llama-index-embeddings-huggingfacefrom llama_index.embeddings.huggingface import HuggingFaceEmbeddingfrom llama_index.core import Settings

Settings.embed_model = HuggingFaceEmbedding( model_name="BAAI/bge-small-en-v1.5")这将使用来自Hugging Face的一个性能良好且快速的默认设置。

您可以在下方找到更多使用详情和可用的自定义选项。

嵌入模型最常见的用法是将其设置在全局 Settings 对象中,然后使用它来构建索引和查询。输入文档将被分解为节点,嵌入模型将为每个节点生成一个嵌入向量。

默认情况下,LlamaIndex将使用text-embedding-ada-002,下面的示例为您手动设置了此配置。

from llama_index.core import VectorStoreIndex, SimpleDirectoryReaderfrom llama_index.embeddings.openai import OpenAIEmbeddingfrom llama_index.core import Settings

# global defaultSettings.embed_model = OpenAIEmbedding()

documents = SimpleDirectoryReader("./data").load_data()

index = VectorStoreIndex.from_documents(documents)然后,在查询时,将再次使用嵌入模型来嵌入查询文本。

query_engine = index.as_query_engine()

response = query_engine.query("query string")默认情况下,嵌入请求会以每批10个的方式发送至OpenAI。对部分用户而言,这可能(偶尔)会触发速率限制。而对需要嵌入大量文档的用户来说,这个批次大小可能过小。

# set the batch size to 42embed_model = OpenAIEmbedding(embed_batch_size=42)使用本地模型最简单的方式是通过 HuggingFaceEmbedding 从 llama-index-embeddings-huggingface 中调用:

# pip install llama-index-embeddings-huggingfacefrom llama_index.embeddings.huggingface import HuggingFaceEmbeddingfrom llama_index.core import Settings

Settings.embed_model = HuggingFaceEmbedding( model_name="BAAI/bge-small-en-v1.5")它加载了 BAAI/bge-small-en-v1.5 嵌入模型。您可以使用 Hugging Face 上的任何 Sentence Transformers 嵌入模型。

除了HuggingFaceEmbedding构造函数中可用的关键字参数外,其他关键字参数会传递给底层的SentenceTransformer实例,例如backend、model_kwargs、truncate_dim、revision等。

ONNX 或 OpenVINO 优化

Section titled “ONNX or OpenVINO optimizations”LlamaIndex 还支持使用 ONNX 或 OpenVINO 来加速本地推理,通过依赖 Sentence Transformers 和 Optimum 实现。

一些先决条件:

pip install llama-index-embeddings-huggingface# Plus any of the following:pip install optimum[onnxruntime-gpu] # For ONNX on GPUspip install optimum[onnxruntime] # For ONNX on CPUspip install optimum-intel[openvino] # For OpenVINO创建时指定模型和输出路径:

from llama_index.embeddings.huggingface import HuggingFaceEmbeddingfrom llama_index.core import Settings

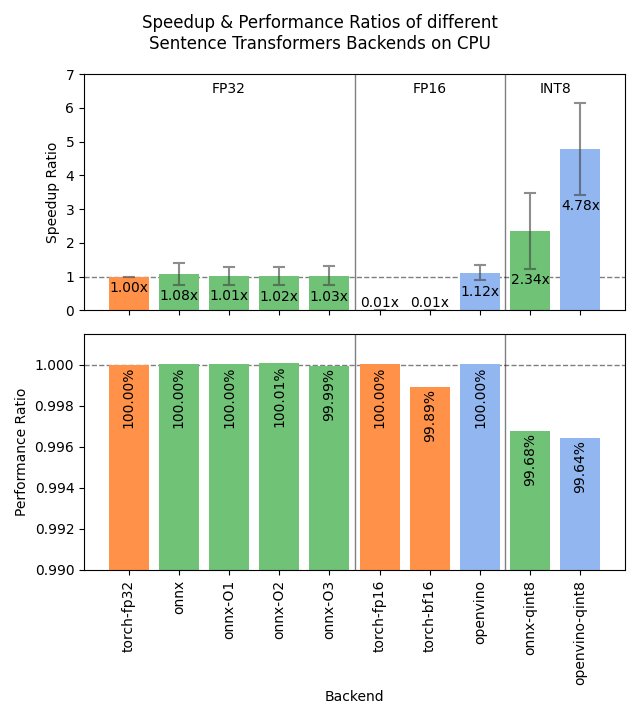

Settings.embed_model = HuggingFaceEmbedding( model_name="BAAI/bge-small-en-v1.5", backend="onnx", # or "openvino")如果模型仓库中尚未包含ONNX或OpenVINO模型,则将使用Optimum自动进行转换。 有关各种选项的性能基准,请参阅Sentence Transformers文档。

如果我想使用优化或量化后的模型检查点该怎么办?

It's common for embedding models to have multiple ONNX and/or OpenVINO checkpoints, for example sentence-transformers/all-mpnet-base-v2 with 2 个 OpenVINO 检查点 and 9个ONNX检查点. See the Sentence Transformers 文档 for more details on each of these options and their expected performance.您可以在 model_kwargs 参数中指定 file_name 来加载特定检查点。例如,要从 sentence-transformers/all-mpnet-base-v2 模型仓库加载 openvino/openvino_model_qint8_quantized.xml 检查点:

from llama_index.embeddings.huggingface import HuggingFaceEmbeddingfrom llama_index.core import Settings

quantized_model = HuggingFaceEmbedding( model_name="sentence-transformers/all-mpnet-base-v2", backend="openvino", device="cpu", model_kwargs={"file_name": "openvino/openvino_model_qint8_quantized.xml"},)Settings.embed_model = quantized_model在CPU上我应该使用哪个选项?

如Sentence Transformers基准测试所示,OpenVINO量化至int8(openvino_model_qint8_quantized.xml)具有极高的性能,仅需牺牲少量精度。若需确保结果完全一致,则基础的backend=“openvino”或backend=“onnx”可能是最佳选择。

给定此查询和这些文档,以下是使用 int8 量化的 OpenVINO 与默认 Hugging Face 模型获得的结果:

query = "Which planet is known as the Red Planet?"documents = [ "Venus is often called Earth's twin because of its similar size and proximity.", "Mars, known for its reddish appearance, is often referred to as the Red Planet.", "Jupiter, the largest planet in our solar system, has a prominent red spot.", "Saturn, famous for its rings, is sometimes mistaken for the Red Planet.",]HuggingFaceEmbedding(device='cpu'):- Average throughput: 38.20 queries/sec (over 5 runs)- Query-document similarities tensor([[0.7783, 0.4654, 0.6919, 0.7010]])

HuggingFaceEmbedding(backend='openvino', device='cpu', model_kwargs={'file_name': 'openvino_model_qint8_quantized.xml'}):- Average throughput: 266.08 queries/sec (over 5 runs)- Query-document similarities tensor([[0.7492, 0.4623, 0.6606, 0.6556]])在保持相同文档排序的同时,实现了6.97倍的加速。

点击查看重现脚本

import timefrom llama_index.embeddings.huggingface import HuggingFaceEmbeddingfrom llama_index.core import Settings

quantized_model = HuggingFaceEmbedding( model_name="sentence-transformers/all-mpnet-base-v2", backend="openvino", device="cpu", model_kwargs={"file_name": "openvino/openvino_model_qint8_quantized.xml"},)quantized_model_desc = "HuggingFaceEmbedding(backend='openvino', device='cpu', model_kwargs={'file_name': 'openvino_model_qint8_quantized.xml'})"baseline_model = HuggingFaceEmbedding( model_name="sentence-transformers/all-mpnet-base-v2", device="cpu",)baseline_model_desc = "HuggingFaceEmbedding(device='cpu')"

query = "Which planet is known as the Red Planet?"

def bench(model, query, description): for _ in range(3): model.get_agg_embedding_from_queries([query] * 32)

sentences_per_second = [] for _ in range(5): queries = [query] * 512 start_time = time.time() model.get_agg_embedding_from_queries(queries) sentences_per_second.append(len(queries) / (time.time() - start_time))

print( f"{description:<120}: Avg throughput: {sum(sentences_per_second) / len(sentences_per_second):.2f} queries/sec (over 5 runs)" )

bench(baseline_model, query, baseline_model_desc)bench(quantized_model, query, quantized_model_desc)

# Example documents for similarity comparison. The first is the correct one, and the rest are distractors.docs = [ "Mars, known for its reddish appearance, is often referred to as the Red Planet.", "Venus is often called Earth's twin because of its similar size and proximity.", "Jupiter, the largest planet in our solar system, has a prominent red spot.", "Saturn, famous for its rings, is sometimes mistaken for the Red Planet.",]

baseline_query_embedding = baseline_model.get_query_embedding(query)baseline_doc_embeddings = baseline_model.get_text_embedding_batch(docs)

quantized_query_embedding = quantized_model.get_query_embedding(query)quantized_doc_embeddings = quantized_model.get_text_embedding_batch(docs)

baseline_similarity = baseline_model._model.similarity( baseline_query_embedding, baseline_doc_embeddings)print( f"{baseline_model_desc:<120}: Query-document similarities {baseline_similarity}")quantized_similarity = quantized_model._model.similarity( quantized_query_embedding, quantized_doc_embeddings)print( f"{quantized_model_desc:<120}: Query-document similarities {quantized_similarity}")在GPU上我应该使用哪个选项?

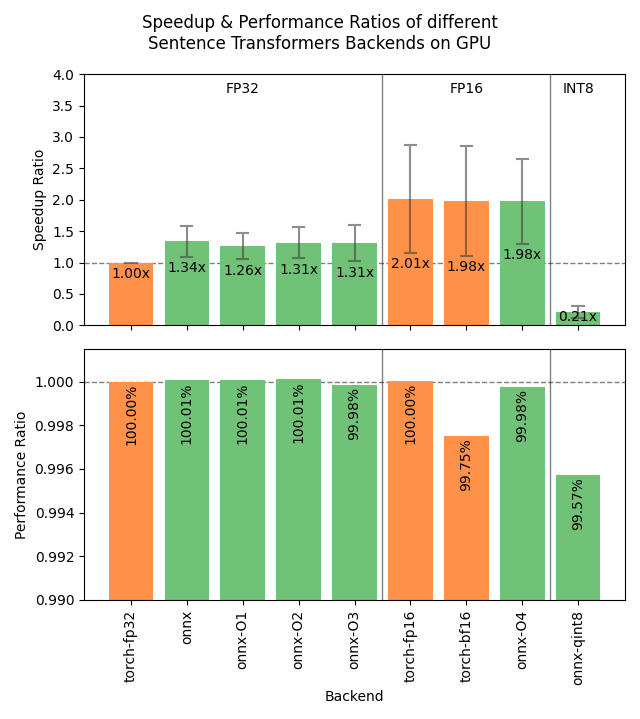

在GPU上,OpenVINO并不特别有优势,而ONNX也不一定优于在默认torch后端运行的量化模型。

这意味着您无需额外的依赖项即可在GPU上实现显著的加速,只需在加载模型时使用较低的精度:

from llama_index.embeddings.huggingface import HuggingFaceEmbeddingfrom llama_index.core import Settings

Settings.embed_model = HuggingFaceEmbedding( model_name="BAAI/bge-small-en-v1.5", device="cuda", model_kwargs={"torch_dtype": "float16"},)如果我想要的模型没有我期望的后端和优化或量化怎么办?

后端导出 Hugging Face 空间可用于将任何 Sentence Transformers 模型转换为 ONNX 或 OpenVINO,并应用量化或优化。这将在模型仓库中创建一个包含转换后模型文件的拉取请求。然后您可以通过指定 revision 参数在 LlamaIndex 中使用此模型,如下所示:

from llama_index.embeddings.huggingface import HuggingFaceEmbeddingfrom llama_index.core import Settings

Settings.embed_model = HuggingFaceEmbedding( model_name="BAAI/bge-small-en-v1.5", backend="openvino", revision="refs/pr/16", # for pull request 16: https://huggingface.co/BAAI/bge-small-en-v1.5/discussions/16 model_kwargs={"file_name": "openvino_model_qint8_quantized.xml"},)LangChain 集成

Section titled “LangChain Integrations”我们还支持 Langchain 提供的所有嵌入模型 此处。

以下示例使用 Langchain 的嵌入类从 Hugging Face 加载模型。

pip install llama-index-embeddings-langchainfrom langchain.embeddings.huggingface import HuggingFaceBgeEmbeddingsfrom llama_index.core import Settings

Settings.embed_model = HuggingFaceBgeEmbeddings(model_name="BAAI/bge-base-en")如果您想使用LlamaIndex或Langchain未提供的嵌入模型,您也可以扩展我们的基础嵌入类并实现您自己的嵌入模型!

下面的示例使用 Instructor Embeddings (安装/设置详情请见此处),并实现了一个自定义嵌入类。Instructor embeddings 的工作原理是提供文本以及关于待嵌入文本领域的"指令"。这在嵌入来自非常具体和专业主题的文本时特别有用。

from typing import Any, Listfrom InstructorEmbedding import INSTRUCTORfrom llama_index.core.embeddings import BaseEmbedding

class InstructorEmbeddings(BaseEmbedding): def __init__( self, instructor_model_name: str = "hkunlp/instructor-large", instruction: str = "Represent the Computer Science documentation or question:", **kwargs: Any, ) -> None: super().__init__(**kwargs) self._model = INSTRUCTOR(instructor_model_name) self._instruction = instruction

def _get_query_embedding(self, query: str) -> List[float]: embeddings = self._model.encode([[self._instruction, query]]) return embeddings[0]

def _get_text_embedding(self, text: str) -> List[float]: embeddings = self._model.encode([[self._instruction, text]]) return embeddings[0]

def _get_text_embeddings(self, texts: List[str]) -> List[List[float]]: embeddings = self._model.encode( [[self._instruction, text] for text in texts] ) return embeddings

async def _aget_query_embedding(self, query: str) -> List[float]: return self._get_query_embedding(query)

async def _aget_text_embedding(self, text: str) -> List[float]: return self._get_text_embedding(text)您也可以将嵌入作为独立模块用于您的项目、现有应用程序或一般测试和探索。

embeddings = embed_model.get_text_embedding( "It is raining cats and dogs here!")我们支持与 OpenAI、Azure 以及 LangChain 提供的所有功能集成。